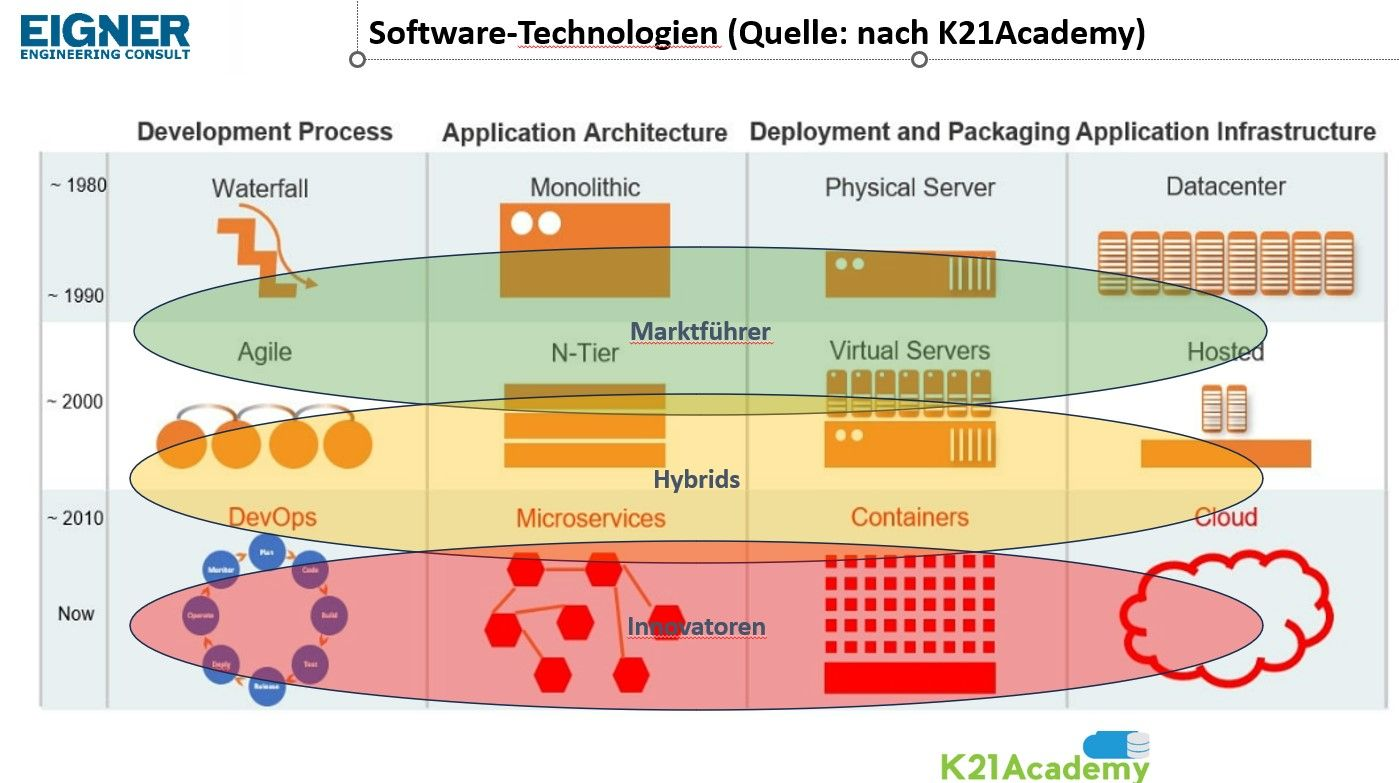

For the last few weeks, I’ve been following the publications of Prof. Martin Eigner, in which he speaks about PLM technologies and products and discusses multiple aspects of the historical technology decisions made by ERP and PLM software vendors. He also raised a few very interesting and important points about the complexity of the technology decisions that industrial companies need to make these days when choosing their next generation PLM platforms. I captured the following picture from his last article. Check this out.

The picture above is great and outlines the technological evolution of software development, application architecture and infrastructure. At the same time, it is also important to check the aspects related to data modeling and data management, which are not in the picture. I wrote an article about this a few years ago – PLM Data Architecture evolution.

I like the formula Prof. Eigner suggested for the future “One System Solution” for PLM software. Here is the passage:

I don’t like to use specific system names in posts, but here’s the exception. Let’s dream of the One-System solution. Let’s mix the Low Code engine of Aras with the functional scope of Contact, the technology of OpenBOM and the strategic and integrative importance of SAP EPD.

Next generation is such a tasty word. But it is also the most dangerous one. Every company I ever worked for had some “next generation R&D” project. It is in the nature of engineering and development organizations to think about next generation technologies. However, while doing so, companies always need to think about their customers’ demands and problems. Otherwise, we end up with the “technology looking for a problem” situation that has happened to many companies in the past.

In my article today, I want to talk about the technologies of entrenched PLM vendors. They are market leaders and their solutions are mature, scalable and robust. The same can be said about any previous generation technology in any field before new breakthrough products or technologies were introduced to the market. Propeller engine aircraft were perfect before the jet engine was invented. We can probably say the same about internal combustion engines, looking at how all car companies are investing resources in electric vehicles.

I’d like to outline 5 gaps I can see in the existing PLM platforms and the problems that cannot be solved without eliminating these gaps.

1. Multi-Tenant Architecture

A significant gap in current PLM solutions is the lack of a robust multi-tenant architecture. Multi-tenancy, where multiple customers use the same software instance while keeping their data separate and secure, is crucial for cloud-based PLM solutions. Many PLM systems are still rooted in single-tenant architectures, which can lead to increased costs, reduced efficiency, and difficulty in managing updates and upgrades. While the question of cost and infrastructure is usually more on the surface when discussing multi-tenant vs single-tenant architecture, what is usually missed is the data architecture. The database models used by all market leaders today are single-tenant and go back to the database designs of the 1990s. The absence of a multi-tenant data architecture is a big showstopper for every scenario related to collaboration and communication between multiple companies (e.g. OEMs, suppliers, contractors). The transition to a fully multi-tenant data and system architecture is essential for data sharing, portability, scalability, reduced overhead, and improved software maintenance.
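To make the data architecture point more tangible, here is a minimal sketch in Python of a shared store in which every record carries its owning tenant and an explicit list of tenants it is shared with. All names here (`Item`, `MultiTenantStore`, `share`, `visible_to`, the tenant IDs and part numbers) are hypothetical and exist only for illustration; this is not how any specific PLM product implements tenancy.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    part_number: str
    description: str
    owner: str                                      # tenant that owns the record
    shared_with: set = field(default_factory=set)   # tenants granted read access


class MultiTenantStore:
    """One logical store serving many tenants (hypothetical sketch).

    Part numbers are assumed to be globally unique to keep the example short.
    """

    def __init__(self):
        self._items = {}

    def add(self, tenant, part_number, description):
        self._items[part_number] = Item(part_number, description, owner=tenant)

    def share(self, tenant, part_number, other_tenant):
        item = self._items[part_number]
        if item.owner != tenant:
            raise PermissionError("only the owner can share an item")
        item.shared_with.add(other_tenant)

    def visible_to(self, tenant):
        # Every read is filtered by tenant; nothing is copied between tenants.
        return sorted(
            pn for pn, item in self._items.items()
            if item.owner == tenant or tenant in item.shared_with
        )


store = MultiTenantStore()
store.add("oem", "A-100", "Drone frame")
store.add("oem", "A-200", "Flight controller")
store.add("supplier", "S-900", "Motor")
store.share("oem", "A-100", "supplier")

print(store.visible_to("supplier"))  # ['A-100', 'S-900']
print(store.visible_to("oem"))       # ['A-100', 'A-200']
```

The point of the sketch is that sharing becomes a grant on a single record rather than an export from one database and an import into another.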

2. Scalability in Data Management and Queries

The data management revolution of the last 20 years, which introduced new types of databases, data models, query processing and many other aspects of data organization, was part of the technological foundation of global SaaS and cloud business systems. At the same time, almost none of these technological advances can be spotted in the architecture, data models and tools of the PLM market leaders. The architecture of all leading PLM systems is RDBMS-based with a flexible object modeler. These data management systems are struggling with growing demands for data integration and query processing, product configuration applications and query efficiency. BOM traceability and impact analysis in a complex product can take a long time. As the complexity and volume of data increase, we are going to see even more struggle with existing PLM architectures. At the same time, the ability to efficiently handle large datasets and perform complex queries in real time is critical for informed decision-making. Modern products, with their multi-disciplinary product data organization (mechanical, electronics, software) multiplied by an extended lifecycle (maintenance and support), are going to be killers for existing SQL database models.
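Impact analysis is, at its core, a graph traversal: starting from a changed part, walk upward through every assembly that uses it. In a relational model this typically means recursive queries or repeated self-joins over a parent-child table. The following is a minimal sketch in Python of the same question asked against an in-memory graph. The BOM content and the function names (`build_where_used`, `impacted_by`) are assumptions made up for the example.

```python
from collections import deque

# Hypothetical BOM: parent -> list of direct children.
bom = {
    "drone": ["frame", "controller", "motor-assy"],
    "motor-assy": ["motor", "screw-m3"],
    "frame": ["arm", "screw-m3"],
    "controller": ["pcb", "firmware"],
}


def build_where_used(bom):
    """Invert the parent -> children map into child -> parents."""
    where_used = {}
    for parent, children in bom.items():
        for child in children:
            where_used.setdefault(child, set()).add(parent)
    return where_used


def impacted_by(part, where_used):
    """Breadth-first walk upward: every assembly that contains `part`."""
    seen = set()
    queue = deque([part])
    while queue:
        current = queue.popleft()
        for parent in where_used.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


where_used = build_where_used(bom)
print(sorted(impacted_by("screw-m3", where_used)))
# ['drone', 'frame', 'motor-assy']
```

A real product structure adds revisions, effectivity and configurations on top of this, which is exactly where query cost starts to grow.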

3. Limited Data Analytics

Another area where PLM technologies fall short is in leveraging data science and advanced analytics. While PLM systems gather vast amounts of data throughout the product lifecycle, their capacity to analyze this data to get any type of insight or support intelligent decision making is often limited. The basic capabilities of most PLM platforms are limited to their RDBMS/SQL foundation. I’m not talking about additional tools and services that are capable of retrieving the data from a PLM database for the purpose of search, analytics, etc., but about the native ability of the PLM data management layer. The opportunity to use more efficient and semantically rich queries can open up functionality that is not available in existing PLM platforms today. Integrating advanced analytics, machine learning algorithms, and AI capabilities can transform PLM systems into more predictive and proactive tools, offering deeper insights into product performance and customer needs.

4. Integration Capabilities

Integration capabilities are crucial for PLM systems to function effectively within the broader enterprise technology landscape. Historically, PLM software demanded a high level of integration between PLM tools (database) and other engineering applications and enterprise systems. Most of these integrations were simply “synchronizing” data between systems. The main challenges are related to the complexity and robustness of these integrations. And the reality is that the majority of these integrations were done by accessing data directly in the RDBMS via SQL queries. The availability of robust APIs is limited, and for complex scenarios of data retrieval or data changes, they might not be available at all. The lack of scalable and robust integration leads to scenarios where companies are looking into migrating data between systems instead of re-using data. Re-using data would improve the flow of information across different business functions and help achieve a cohesive and efficient business process.

5. Data Modeling Flexibility

The overall robustness of product lifecycle management was always measured by the ability of product data management to model data for the product development process. Each company (even within the same industry) has a slightly different approach to product lifecycle, document management and process management. This requires a data definition capable of connecting processes and software tools together for designing and building products. PLM flexibility in data modeling was always limited by the capabilities of the object modelers that PLM software implemented on top of SQL databases. While newer PLM tools developed good ORM (object relational modelers), the overall data management flexibility is limited compared, for example, to modern data management tools (e.g. graphs and semantic models). At the same time, the ability to adapt data models to specific business needs and industry requirements is essential for the effective management of product data.
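As an illustration of what graph-style flexibility means in practice, here is a minimal sketch in Python of a property graph where new object types and new relationship types are introduced as plain data, without changing a predefined schema. The `ProductGraph` class, its methods and the sample IDs are hypothetical and are not taken from any existing PLM tool or graph database.

```python
class ProductGraph:
    """Tiny in-memory property graph (hypothetical sketch)."""

    def __init__(self):
        self.nodes = {}   # node id -> {"type": ..., plus arbitrary properties}
        self.edges = []   # (source id, relation name, target id)

    def add_node(self, node_id, node_type, **props):
        self.nodes[node_id] = {"type": node_type, **props}

    def relate(self, source, relation, target):
        self.edges.append((source, relation, target))

    def related(self, source, relation):
        return [t for s, r, t in self.edges if s == source and r == relation]


g = ProductGraph()
# Three different disciplines, each with its own set of properties.
g.add_node("P-1", "part", name="Controller board", revision="B")
g.add_node("FW-7", "software", name="Flight firmware", version="2.3.1")
g.add_node("REQ-12", "requirement", text="Failsafe on signal loss")

# New relationship types are just new labels on edges.
g.relate("P-1", "runs", "FW-7")
g.relate("FW-7", "satisfies", "REQ-12")

print(g.related("P-1", "runs"))        # ['FW-7']
print(g.related("FW-7", "satisfies"))  # ['REQ-12']
```

In a fixed relational object model, each of these additions would usually require a new class or table definition before any data could be stored.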

Is there a real need for new technologies in PLM?

This is a question you can hear quite often. One opinion is that PLM technologies are mature enough and the biggest PLM gap is in aligning people with technologies and helping organizations to transform themselves, so they will be able to adopt existing PLM tools and methods. This idea has some merit, because the educational gap in data and process management is huge. I posted about it in my PLM unstoppable playbook posts. At the same time, new technologies that can turn some of the processes upside down and leverage data intelligence for decision making are indisputably more powerful and can change the landscape of PLM development we have now.

Conclusion

Existing PLM platforms from leading vendors are mature and robust for their level of technological development, but they are limited by the same technologies. RDBMS models and technologies will continue to advance, and we can expect further improvements in RDBMS to keep coming. But these advancements cannot change the fundamentals and the existing trajectory of their capabilities. Therefore, PLM platforms will be limited to the PLM ORM (object relations models) developed by existing vendors using SQL databases. The tenant model is another limitation that is heavily underestimated by companies. A robust multi-tenant data model can help to deploy solutions at a fraction of the cost and with a different order of magnitude in performance, flexibility and data sharing. We already have examples of these models and the results they can produce. Analytical models and future AI tools can leverage real-time data available across a large network of customers (e.g. OEM + suppliers) instead of synchronizing data between multiple single-tenant databases. Bringing in new technologies will eventually disrupt existing mature platforms. By adopting new technologies, PLM systems can be transformed into more efficient, flexible, and insightful tools, driving innovation and competitive advantage in the dynamic market landscape. Just my thoughts…

Best, Oleg

Disclaimer: I’m co-founder and CEO of OpenBOM developing a digital-thread platform with cloud-native PDM & PLM capabilities to manage product data lifecycle and connect manufacturers, construction companies, and their supply chain networks. My opinion can be unintentionally biased.

The post Are Dominant PLM Leaders Ignoring These 5 Gaps? appeared first on Beyond PLM (Product Lifecycle Management) Blog.
