Data is one of the most precious assets in the modern business world. Manufacturing companies are sitting on piles of data, but, like crude oil, data by itself does a company no good. You need to bring the data to a digital refinery and make it accessible to the business, available for analytics, and relevant to your current processes. Data that is not accessible is like a broken clock that shows the correct time twice a day.

For a very long time, PLM vendors and strategists have been selling the idea of a single source of truth, which resides in a PLM database on a company server and is accessed by everyone. The idea of a single source of truth is great. However, the reality of manufacturing companies makes this idea hard to implement. It is no big secret that PLM systems are most popular with large companies, and even there, the love for PLM drops significantly as soon as you step outside the engineering and product development organizations.

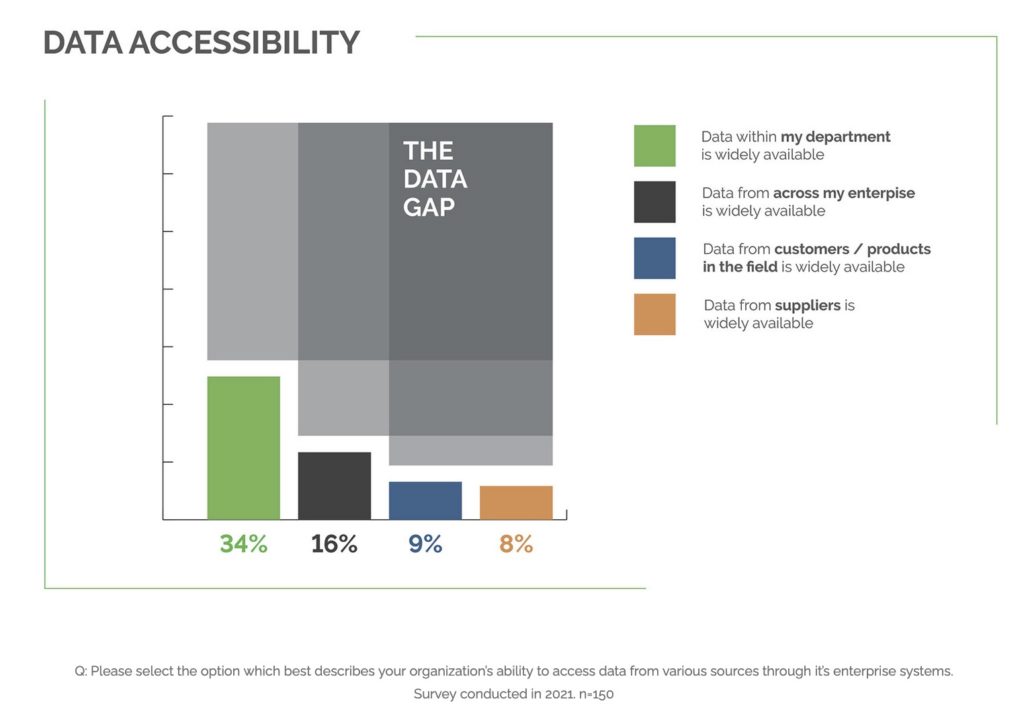

My attention was caught by an IndustryWeek article referencing a report from PLM leader PTC about the data gap. Navigate here and read: Too Often, the Right Data Isn’t Getting to the Right Person (Even within Their Own Team). You can find the survey here. Here is the visual representation of the data gap that gives you the best understanding of what happens.

Read the article and the report, and draw your own conclusions. The most shocking number and passage are below. The numbers for the enterprise, customers, and suppliers are even worse, as you can see: 16%, 9%, and 3%.

Just over one-third of manufacturers claim, “data within my department is widely available.” This is a surprisingly low figure considering most would consider data at the departmental or team level to be seamlessly accessible to everyone. This would mean most functions in organizations are not even recognizing the value of their data internally. Engineering teams could be creating products on outdated design requirements or conflicting product iterations from multiple sources of truth.

So, how can companies solve the problem? The article recommends three phases: clean, connect, and create a loop. While the theory behind the three steps seems very logical, it made me think about the implementation, and here are three things that make me think there is more trouble here than it first sounds.

1- Data cleaning is an extremely complex process. “Clean” is much easier said than done. In my experience, customers have trouble manually cleaning up a CAD assembly so that it has correct part numbers, and that is nothing compared to asking a company to clean the data spread across departmental and supply chain silos. So, cleaning is the first miracle. The demand for automation must be huge.

2- Connecting data requires putting the data in one centralized system. PLM is certainly positioned to do so. However, it means placing the data of multiple departments, companies, contractors, and suppliers together in a single PLM database belonging to… one company? That can meet some headwinds. Companies watch their data very carefully and are not ready to give up control.

3- A real-time loop is hard in distributed connected systems. The time when IT could run data sync overnight is over. In modern manufacturing companies, there is always (!!!) somebody working somewhere in the global space, and a 24-hour cycle is not good enough. So, what does the loop mean in real life? What technology can connect OEMs, suppliers, tech centers, dealerships, and customers?

Where is the problem? The roots of the problem lie in the outdated PLM data management architecture, which segregates data in silos using technologies developed back in the 1990s. These old PLM databases are like old piston engines compared to jet technologies. SQL-based PLM databases represent more than 90% of the PLM installed base, but they are designed for a single company working in isolation. When we need to connect information with departments, customers, contractors, and suppliers, we need a different paradigm. Such a paradigm exists: a multi-tenant database platform capable of separating the data between tenants while keeping it connected in a single platform.
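To make the multi-tenant idea a bit more concrete, here is a minimal in-memory sketch in Python. It is only an illustration and not how any real PLM system or platform is implemented. The names `MultiTenantStore`, `Item`, the tenant ids, and the part numbers are all hypothetical. The assumption is simple: every record has one owning tenant, the owner can share it with other tenants, and each tenant sees only what it owns or what was shared with it.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    part_number: str
    owner: str                                      # tenant that owns the record
    shared_with: set = field(default_factory=set)   # tenants granted read access


class MultiTenantStore:
    """Hypothetical single platform holding items from every tenant."""

    def __init__(self):
        self._items = {}  # part_number -> Item

    def add(self, owner, part_number):
        self._items[part_number] = Item(part_number, owner)

    def share(self, owner, part_number, tenant):
        item = self._items[part_number]
        if item.owner != owner:
            # Control over the data stays with the owning tenant.
            raise PermissionError("only the owner can share an item")
        item.shared_with.add(tenant)

    def visible_to(self, tenant):
        # A tenant sees its own items plus items shared with it.
        return sorted(
            pn for pn, item in self._items.items()
            if item.owner == tenant or tenant in item.shared_with
        )


store = MultiTenantStore()
store.add("oem", "PN-100")
store.add("oem", "PN-200")
store.add("supplier", "SUP-7")
store.share("oem", "PN-100", "supplier")

oem_view = store.visible_to("oem")            # the OEM's own items
supplier_view = store.visible_to("supplier")  # own items plus the shared PN-100
```

The point of the sketch is that the data lives in one place and is connected by sharing, while each company keeps control over what others can see.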

What is my conclusion?

There is a big gap in data availability, starting within a single department and widening as you move downstream to companies, customers, suppliers, and contractors. In my view, the root cause of this disconnect lies at the intersection of data management technologies and communication processes. By changing both, we can break data silos and let companies access data in real time. Just my thoughts…

Best, Oleg

Disclaimer: I’m co-founder and CEO of OpenBOM developing a digital network-based platform that manages product data and connects manufacturers, construction companies, and their supply chain networks. My opinion can be unintentionally biased.

The post Digital Thread, PLM Vision and Data Gaps appeared first on Beyond PLM (Product Lifecycle Management) Blog.
