For the last few years, I’ve been following the implementation of the PLM system and related infrastructure at Facebook. In case you missed it, my earlier blog from COFES 2019 shared a glimpse of what the Facebook PLM team does. Check it here.

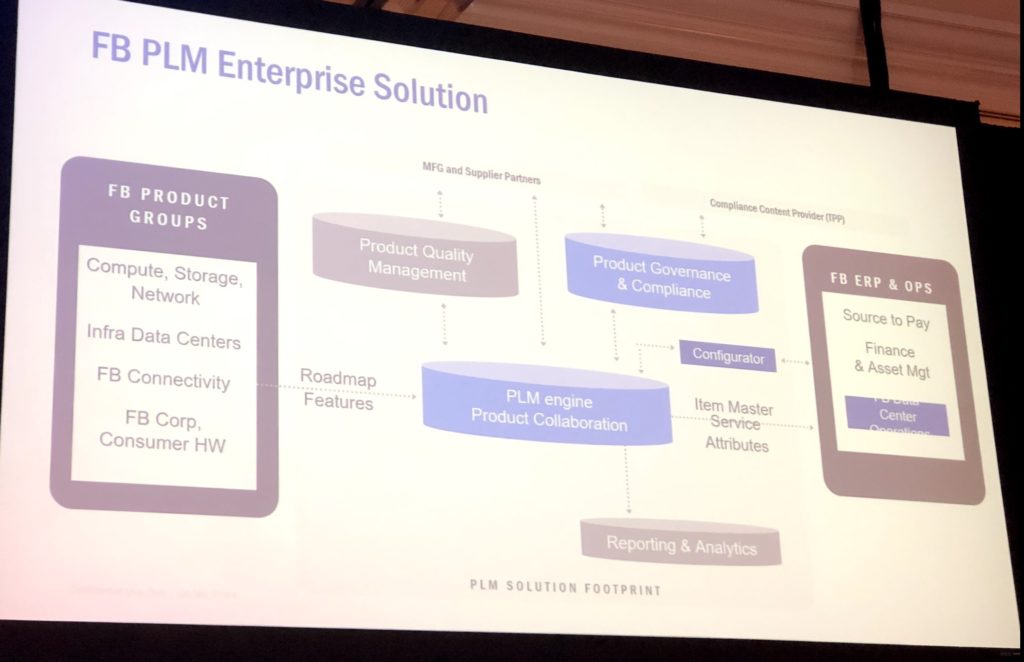

The purpose of PLM development at Facebook is to build a robust infrastructure for developing data centers. The challenge in Facebook’s hyper-growth environment is to support the needs of the internal Facebook hardware team. Facebook Infrastructure designs, develops, and builds most of its hardware infrastructure and data centers. Operations Engineering is responsible for transitioning product development into production and delivery to our data centers. We are in the process of developing an integrated environment across field failures, manufacturing testing and yields, predictive analytics, hardware reliability, and product data management. In this presentation, we will discuss the challenges and benefits of building an integrated environment.

At ConX19, I had a chance to listen to Facebook’s Noe Gonzales and Alakshendra Khare speaking about PLM operations. They shared some very interesting details about Facebook’s PLM implementation.

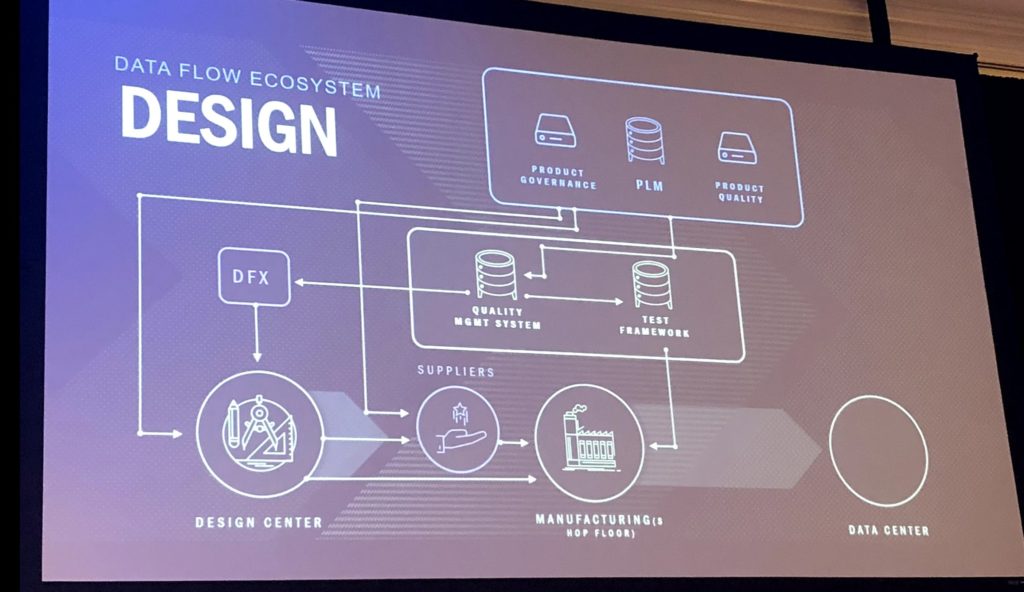

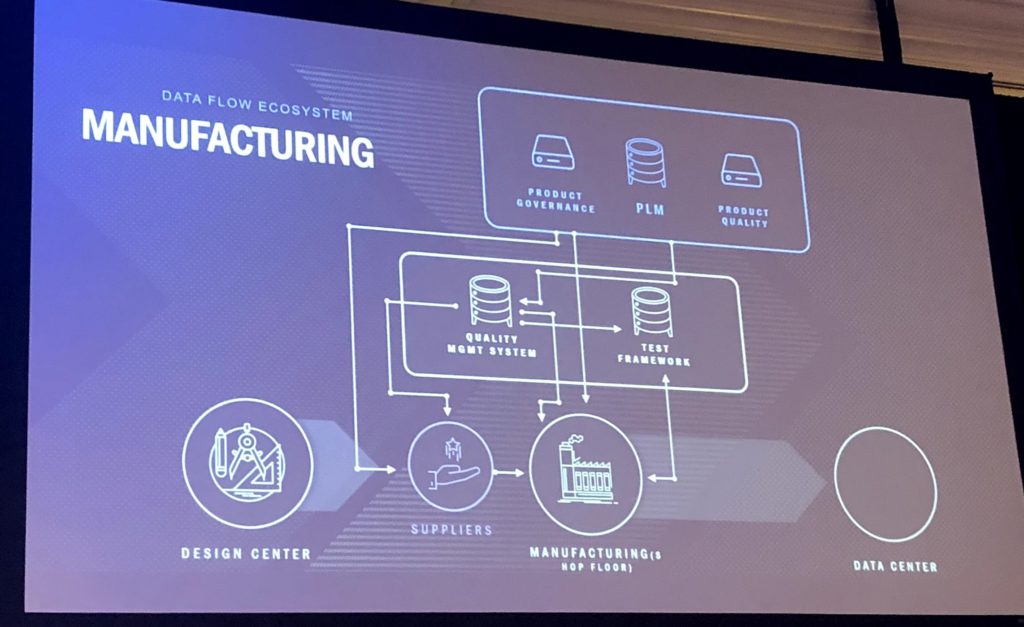

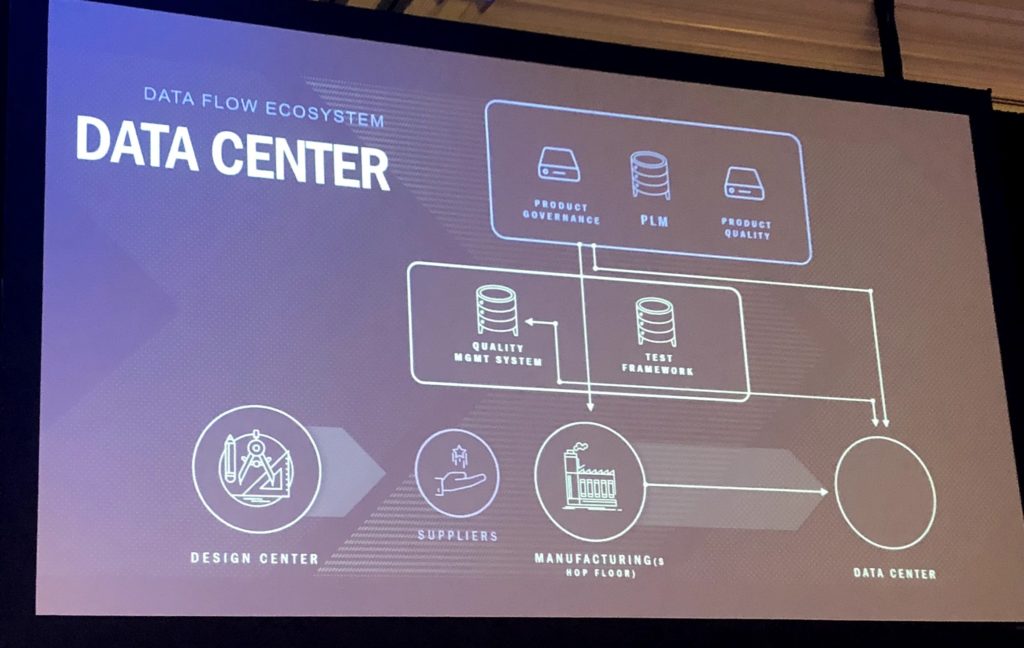

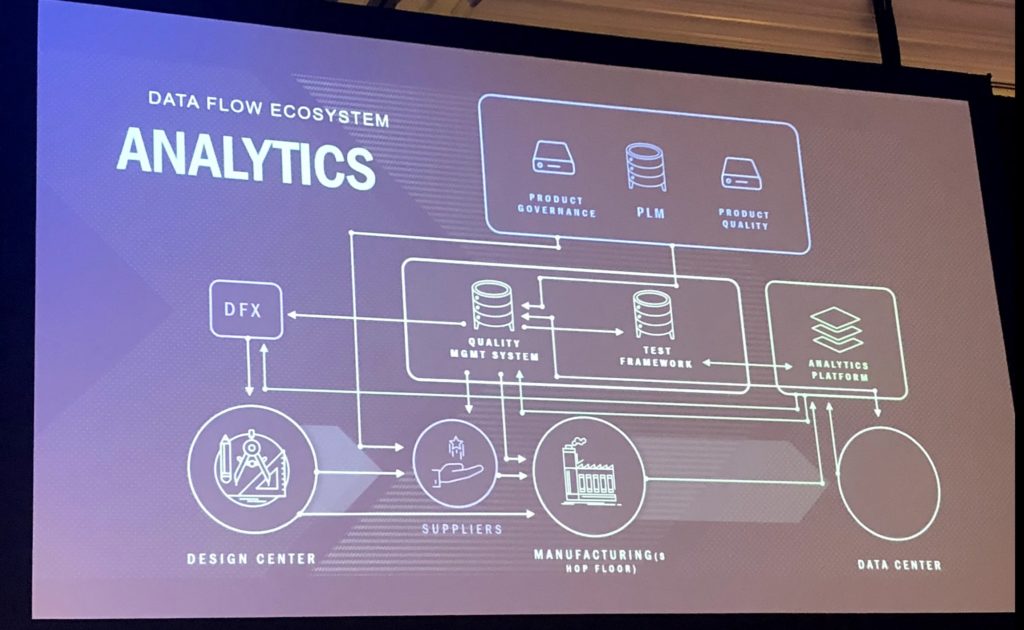

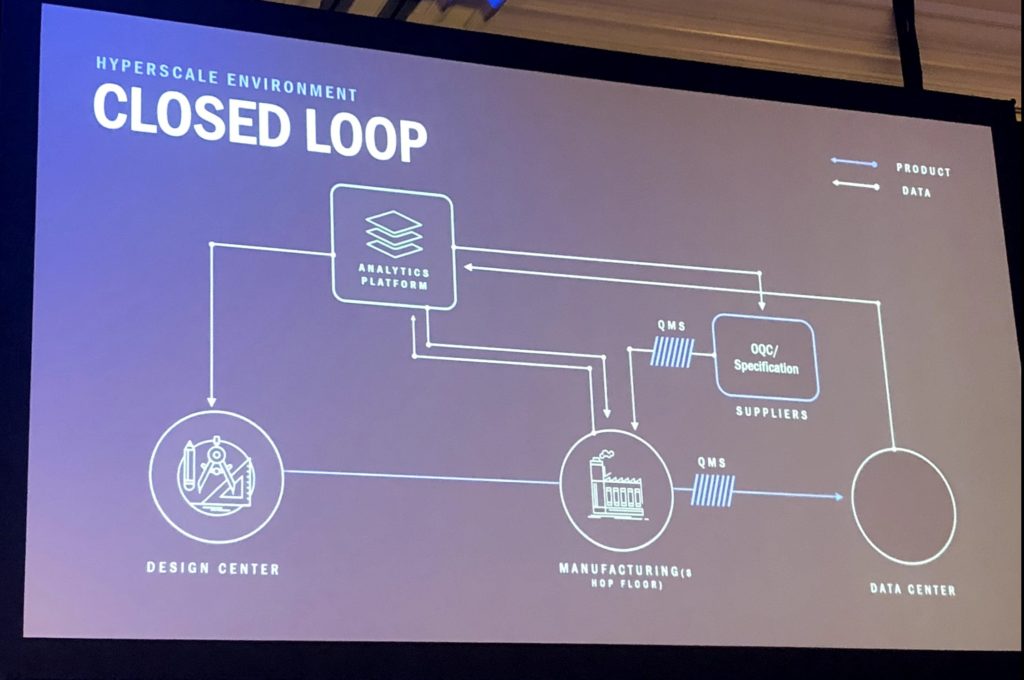

I want to share what is, in my view, one of the most important elements of their implementation: data flows. Check these slides.

Here is my take… In contrast to the traditional database-centric PLM approach, Facebook’s presentation demonstrated that a holistic PLM implementation relies on data flows and a network of applications. It is not about locking data in a single database, but about routing data efficiently between multiple systems and business functions. It is an important element of the new PLM realities. I look forward to learning more about what Facebook PLM is doing and sharing details in the future.
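To make the idea of routing data between systems a bit more concrete, here is a minimal sketch of a topic-based router in Python. Nothing in it comes from the Facebook presentation: the `DataFlowRouter` class, the topic names, and the two downstream "systems" are hypothetical and only illustrate the pattern of publishing a change once and letting each interested system receive it.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical event payload: a plain dict describing a product-data change.
Event = Dict[str, str]
Handler = Callable[[Event], None]


class DataFlowRouter:
    """Routes events by topic to every subscribed system (illustrative only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        # Register a system's handler for one topic.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> int:
        """Deliver the event to each subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


# Hypothetical downstream systems, each keeping its own copy of what it needs.
manufacturing_log: List[Event] = []
reliability_log: List[Event] = []

router = DataFlowRouter()
router.subscribe("part.revised", manufacturing_log.append)
router.subscribe("part.revised", reliability_log.append)
router.subscribe("field.failure", reliability_log.append)

# One change is published once and fans out to every interested system.
delivered = router.publish("part.revised", {"part": "PN-001", "revision": "B"})
router.publish("field.failure", {"part": "PN-001", "symptom": "overheat"})
```

In this sketch no single system owns all the data. The router only knows which topics each system cares about, which is the opposite of pulling everything from one central database.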

What is my conclusion? A successful modern PLM implementation relies on a network of information and on the availability of this information inside and outside of the organization. The interaction of business functions relies on data, and a system that supports such an environment is key for future PLM development. To build such systems, manufacturing companies can combine multiple systems and build many integrations. There is nothing wrong with such an approach. However, this is an opportunity for PLM vendors to find a set of new network platforms and technologies to support the demand for data flows in fast-growing companies. Just my thought…

Best, Oleg

Disclaimer: I’m co-founder and CEO of OpenBOM, which develops a cloud-based bill of materials and inventory management tool for manufacturing companies, hardware startups, and supply chains. My opinion can be unintentionally biased.

The post The role of data flow in future PLM network platforms appeared first on Beyond PLM (Product Lifecycle Management) Blog.
