The Digital Twin buzzword is trending, and everyone in manufacturing is building some kind of digital twin. PLM folks might have their own definition of a digital twin, and you can already hear about multiple twins. The following Siemens PLM interview with Jim Rask will give you a bunch of technological buzzwords about digital twins. The quintessence of the definition is, of course, the “true digital twin”.

A true digital twin is not a single model of the asset, even though it’s referred to as a singular “twin.” It consists of many mathematical models and virtual representations that comprehend the asset’s entire lifecycle – all the way from ideation, through realization and utilization – and all its constituent technologies, including electronics, software, mechanical, manufacturing and in-service performance. We refer to a set of three digital twins: Product, Production and Performance. Each of these digital twins consists of multiple virtual models appropriate for the given product and production system.

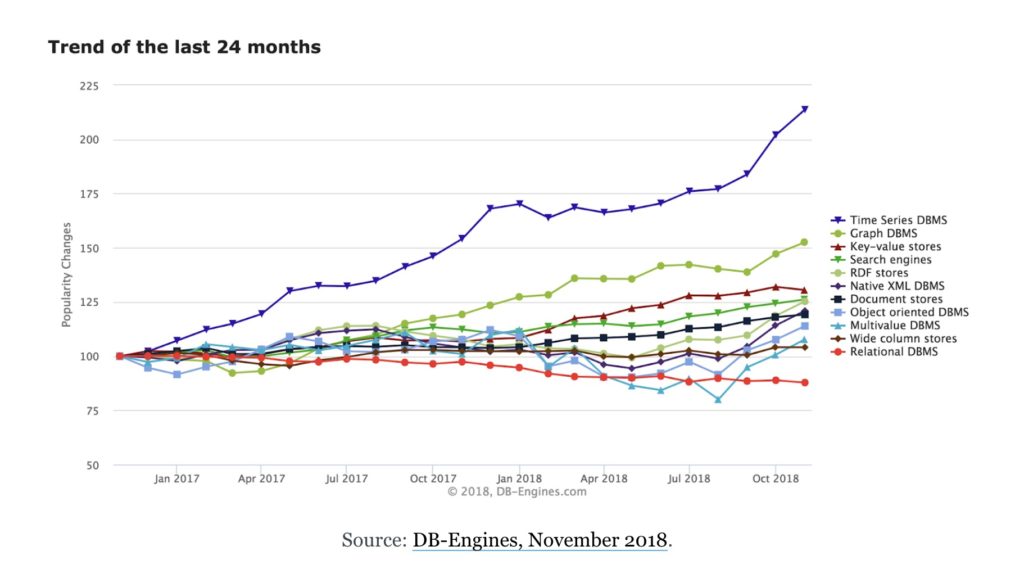

Reading about all these twins made me think about what the source of a true twin is. Whatever model I create, and even if I call it a true digital twin, it will only be as good as the data from the real product that confirms its “twin” status. This brought me to a technology that is a few decades old: the TSDB, or Time Series Database.

A time series database allows users to create, enumerate, update and destroy various time series and organize them. The server often supports a number of basic calculations that work on a series as a whole, such as multiplying, adding, or otherwise combining various time series into a new time series. They can also filter on arbitrary patterns such as time ranges, low value filters, high value filters, or even have the values of one series filter another. Some TSDBs also build in additional statistical functions that are targeted to time series data.
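To make the operations in that definition a bit more concrete, here is a minimal sketch in plain Python. It is not how any particular TSDB works: the dictionaries stand in for stored series, and the names `temperature`, `load`, `combine`, `time_range`, `above` and `where` are all made up for illustration, as are the sample values.

```python
from datetime import datetime, timedelta

# Hypothetical in-memory stand-in for a TSDB: each series maps timestamp -> value.
start = datetime(2024, 1, 1)
temperature = {start + timedelta(minutes=i): v
               for i, v in enumerate([20.0, 21.5, 35.0, 22.0])}
load = {start + timedelta(minutes=i): v
        for i, v in enumerate([0.2, 0.4, 0.9, 0.3])}


def combine(a, b, op):
    """Build a new series by applying op at every timestamp present in both inputs."""
    return {t: op(a[t], b[t]) for t in sorted(a.keys() & b.keys())}


def time_range(series, begin, end):
    """Keep points with begin <= timestamp < end."""
    return {t: v for t, v in series.items() if begin <= t < end}


def above(series, threshold):
    """High value filter: keep points strictly above the threshold."""
    return {t: v for t, v in series.items() if v > threshold}


def where(series, mask_series, predicate):
    """Let the values of one series filter another series."""
    return {t: v for t, v in series.items()
            if t in mask_series and predicate(mask_series[t])}


product = combine(temperature, load, lambda x, y: x * y)       # multiply two series
first_two = time_range(temperature, start, start + timedelta(minutes=2))
hot = above(temperature, 30.0)
temp_under_high_load = where(temperature, load, lambda x: x >= 0.4)
```

A real TSDB would run these operations on the server and over far more data, but the shape of the queries is the same: combine, slice by time, filter by value.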

The Time Scale blog gives a very interesting perspective on time series databases. I found that this relatively old technology is booming.

Today, data is being collected continuously by every technological device. This has created a growing demand for time series databases that can store time-stamped information, which become a source of meaningful insight over time. Enterprise technology, especially, benefits from the growing adoption of TSDBs. According to estimates, the total data produced is expected to grow nearly fivefold to 175 zettabytes by 2025. Whether it's data from sensors monitoring energy infrastructure in a remote environment or data on the performance of a software application running in a public cloud, a majority of it will be the time-stamped data types that work especially well with time series databases.

So, how is that related to PLM and Digital Twin? Here is the thing: a TSDB is the source of truth. It holds the data sets that can help you validate whether your digital twin is actually a twin. The second reason this technology can be beneficial is that it can be a source of data for PLM systems to aggregate and present as part of the PLM value proposition (intelligence). Another way to think about it is a data management service (even one called PLM) that is capable of linking time series data to meaningful changes. Think about a collection of BOM changes connected to a TSDB to reflect how those changes impact system performance.
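Here is a rough sketch of what that connection could look like, assuming the TSDB gives you measured samples, the twin model gives you predictions for the same timestamps, and the PLM system gives you the timestamps at which each BOM revision became effective. Everything in it is hypothetical: the sample values, the revision labels and the helper names `rmse`, `revision_at` and `split_by_revision` are made up for illustration and are not taken from any PLM or TSDB product.

```python
from bisect import bisect_right
from math import sqrt
from statistics import mean

# Measured samples as (timestamp_seconds, value), sorted by time. Values are made up.
measured = [(0, 10.0), (10, 10.4), (20, 9.8), (30, 12.1), (40, 12.3), (50, 11.9)]
# What the twin model predicted for the same timestamps (hypothetical).
predicted = [(0, 10.1), (10, 10.2), (20, 10.0), (30, 10.1), (40, 10.2), (50, 10.0)]
# BOM revisions as (effective_timestamp, revision_label), sorted by time.
bom_changes = [(0, "A"), (30, "B")]


def rmse(measured, predicted):
    """Root mean square error between measurements and twin predictions."""
    pred = dict(predicted)
    return sqrt(mean((v - pred[t]) ** 2 for t, v in measured if t in pred))


def revision_at(t, changes):
    """Return the BOM revision in effect at time t, or None before the first one."""
    times = [c[0] for c in changes]
    i = bisect_right(times, t) - 1
    return changes[i][1] if i >= 0 else None


def split_by_revision(series, changes):
    """Group the samples of a series by the BOM revision in effect at each sample."""
    groups = {}
    for t, v in series:
        groups.setdefault(revision_at(t, changes), []).append((t, v))
    return groups


measured_by_rev = split_by_revision(measured, bom_changes)
# Average measured performance under each BOM revision.
mean_by_rev = {rev: mean(v for _, v in pts) for rev, pts in measured_by_rev.items()}
# How far the twin is from reality under each BOM revision.
error_by_rev = {rev: rmse(pts, predicted) for rev, pts in measured_by_rev.items()}
```

In this made-up data the twin tracks the product closely under revision A and drifts after revision B, which is the kind of signal that tells you the twin is no longer a twin and that a BOM change is the likely reason.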

What is my conclusion? It is time for PLM vendors to acquire some TSDB technology, or a TSDB vendor, to get more insight into operational data about products. Business value today is measured by the amount of intelligence you can bring to decision makers. The more insight a system provides, the more beneficial (and valuable) it is. Just my thoughts…

Best, Oleg

Disclaimer: I’m co-founder and CEO of OpenBOM, developing a cloud-based bill of materials and inventory management tool for manufacturing companies, hardware startups and supply chains. My opinion can be unintentionally biased.

The post PLM, Digital Twin and Time Series Databases appeared first on Beyond PLM (Product Lifecycle Management) Blog.
