Data has always been at the center of every PDM and PLM application. The ability of a PLM system to manage complex structures and information assets has been one of the most important steps in PLM system development and evaluation.

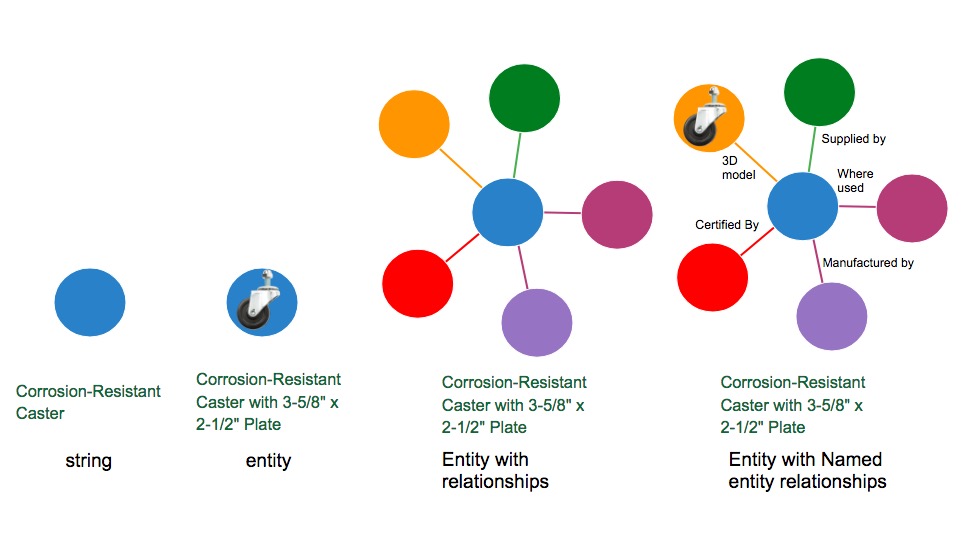

In a nutshell, any PLM system resembles some sort of object-oriented data structure in which you deal with objects of different types, attributes, and relationships. The terminology has been a bit messy, and PLM vendors did a really poor job of setting it straight: Objects, Items, Classes, Types, Properties, Links, Dependencies, References, and then more specific Parts, Assemblies, Documents, etc. On top of the basic elements of data models, you've got templates, best practices, and industry templates. And then, of course, standards and more standards. Data models collided across versions, configurations, and templates.
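To make the terminology a bit more concrete, here is a minimal Python sketch of the kind of generic object model most PLM systems share: typed items with attributes, and typed relationships between them that can carry their own attributes (such as a BOM quantity). The class names `Item` and `Relationship`, the relationship types `uses` and `describes`, and all part numbers are hypothetical, not taken from any particular PLM product.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    """A generic PLM object: a type, an identifier and free-form attributes."""
    item_type: str  # hypothetical type names, e.g. "Part", "Assembly", "Document"
    number: str
    attributes: dict = field(default_factory=dict)


@dataclass
class Relationship:
    """A typed, directed link between two items, with its own attributes."""
    rel_type: str  # hypothetical: "uses" for BOM lines, "describes" for document links
    source: Item
    target: Item
    attributes: dict = field(default_factory=dict)


def children(item, relationships, rel_type="uses"):
    """Return (child, quantity) pairs for one level of a BOM-style structure.

    Identity (`is`) is used to match the source item; quantity defaults to 1.
    """
    return [
        (r.target, r.attributes.get("quantity", 1))
        for r in relationships
        if r.source is item and r.rel_type == rel_type
    ]


# A tiny example: an assembly made of one plate and four bolts, plus a drawing.
asm = Item("Assembly", "A-100", {"description": "Bracket assembly"})
plate = Item("Part", "P-200", {"material": "Aluminum 6061"})
bolt = Item("Part", "P-300", {"description": "M6 bolt"})
drawing = Item("Document", "D-100", {"format": "PDF"})

links = [
    Relationship("uses", asm, plate, {"quantity": 1}),
    Relationship("uses", asm, bolt, {"quantity": 4}),
    Relationship("describes", drawing, asm),
]

for child, qty in children(asm, links):
    print(child.number, qty)  # prints "P-200 1" then "P-300 4"
```

Everything here, from parts to documents, is the same kind of object distinguished only by its type and its links, which is exactly why vendor terminology (Items, Classes, Links, References) can differ while describing similar structures.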

The battleground for PLM vendors over the last decades has been to provide the most reliable and flexible data model, along with an application layer running on top of the data management layer. PLM vendors had mixed success in this flexible data modeling race, and the complexity of systems has grown significantly. PLM data management technologies have a very long lifecycle. Over time, the companies that had a better core architecture and were less involved in mergers and acquisitions ended up in a better position to offer resilient data management solutions.

However, the PLM data management layer is still very limited, because it manages only the information controlled by a specific PLM server in a specific organization. The most sophisticated data model is just a description of a data schema with a bunch of data sets.

Machine learning has become the hot new technology in many industries. For me, one of the most inspiring ideas connecting machine learning with manufacturing and PLM is the “knowledge graph”. Knowledge graphs have been embraced by a few vendors. The most notable work was done by Google, which can be credited with popularizing the term. Knowledge graphs first became popular in connection with semantics. Google's work on its Knowledge Graph was partially based on Freebase, a general-purpose knowledge base Google acquired in 2010. Today, the Knowledge Graph uses schema.org, a collaborative effort among multiple search vendors to develop a schema for tagging content.
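As an illustration of what “tagging content” with schema.org looks like, the sketch below builds a JSON-LD description of a manufactured part in Python. `Product`, `Organization`, `name`, `mpn`, and `manufacturer` are schema.org vocabulary terms; the product values are made up, and how a search engine or knowledge graph actually consumes such markup is outside the scope of this sketch.

```python
import json

# Hypothetical product data. The keys are schema.org terms (Product, name,
# mpn, manufacturer, Organization); the values are invented for illustration.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "M6 hex bolt, stainless steel",
    "mpn": "HB-M6-20",  # manufacturer part number
    "manufacturer": {
        "@type": "Organization",
        "name": "Example Fasteners Inc.",
    },
}

# Serialize as JSON-LD, the form typically embedded in a web page inside a
# <script type="application/ld+json"> element.
print(json.dumps(product, indent=2))
```

The point is that the schema is shared across publishers: any page using the same `Product` and `manufacturer` terms contributes facts that can be linked together, something a schema private to one PLM server cannot do.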

Size and scope are other things that can set a knowledge graph apart from a traditional data model and PLM database. A PLM database is just a reflection of information about the product(s) in a specific company: it is hand-curated and limited to controlling data about the product development lifecycle. This is changing these days as PLM expands into other domains of the organization. While it is very exciting to see PLM growing, the fundamental limitation of PLM databases is that they cannot reach, model, and infer data elements and relationships beyond the scope of a specific company. Think about manufacturing networks as a target for knowledge graphs.
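A minimal sketch of this scope argument, assuming each company's PLM data can be reduced to subject-predicate-object triples: inside one company's database, a dependency trail stops at the company boundary, while a graph merged across a manufacturing network lets you infer indirect dependencies. All company names, part numbers, and the predicates `uses` and `madeBy` are hypothetical, and the "inference" here is a plain transitive closure, not any specific knowledge graph engine.

```python
from collections import defaultdict

# Each company's PLM data reduced to (subject, predicate, object) triples.
# All names and predicates are hypothetical.
oem_plm = [
    ("OEM:Drone-X", "uses", "OEM:Motor-Asm-1"),
    ("OEM:Motor-Asm-1", "uses", "SupplierA:Motor-77"),
]
supplier_a_plm = [
    ("SupplierA:Motor-77", "uses", "SupplierB:Magnet-5"),
    ("SupplierA:Motor-77", "madeBy", "SupplierA"),
]
supplier_b_plm = [
    ("SupplierB:Magnet-5", "madeBy", "SupplierB"),
]


def build_graph(*sources):
    """Merge triples from several sources into predicate -> subject -> {objects}."""
    graph = defaultdict(lambda: defaultdict(set))
    for triples in sources:
        for s, p, o in triples:
            graph[p][s].add(o)
    return graph


def reachable(graph, start, predicate):
    """All nodes reachable from start by following predicate edges (transitive closure)."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in graph[predicate].get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


# The OEM's own database only sees down to the supplier's motor.
local_deps = reachable(build_graph(oem_plm), "OEM:Drone-X", "uses")

# The merged network graph also reveals the second-tier magnet.
network = build_graph(oem_plm, supplier_a_plm, supplier_b_plm)
network_deps = reachable(network, "OEM:Drone-X", "uses")

# Infer which companies the product ultimately depends on.
suppliers = {m for part in network_deps for m in network["madeBy"].get(part, ())}

print(sorted(local_deps))
print(sorted(network_deps))
print(sorted(suppliers))
```

Running it prints `['OEM:Motor-Asm-1', 'SupplierA:Motor-77']` for the local view, adds `'SupplierB:Magnet-5'` in the network view, and infers `['SupplierA', 'SupplierB']` as the companies the drone depends on. The second-tier supplier is invisible to the OEM's database alone.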

What is my conclusion? We are approaching the moment in manufacturing when local PLM databases can no longer provide the insight and decision support businesses need to lead their companies. The modern manufacturing environment is extremely complex and intertwined. The new era of connected products brings the next level of complexity to engineering and manufacturing. Altogether, this will require new PLM and data management technologies to support it. Just my thoughts…

Best, Oleg

Disclaimer: I’m co-founder and CEO of OpenBOM, which develops a cloud-based bill of materials and inventory management tool for manufacturing companies, hardware startups, and supply chains. My opinion can be unintentionally biased.

The post From PLM data to manufacturing knowledge graphs appeared first on Beyond PLM (Product Lifecycle Management) Blog.
